The Importance of Crawl Budgets in SEO

What is a crawl budget?

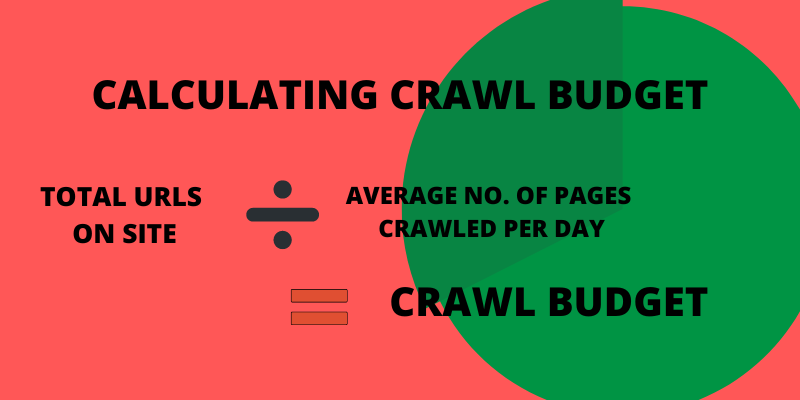

Crawl budget is the number of pages on a website that Googlebot crawls in a given time frame.

Put another way, it is the frequency with which search engine crawlers (i.e., spiders and bots) visit your domain's pages.

That frequency is a compromise between Googlebot's efforts to avoid overloading your server and Google's overall desire to crawl your domain.
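One way to see this frequency in practice is to count crawler requests per day in your server access logs. The following is a minimal sketch, assuming the logs use the common "combined" log format and that matching the string "Googlebot" in the user agent is good enough for a first look (user agents can be spoofed). The function name and the sample log lines are made up for illustration.

```python
import re
from collections import Counter

# Matches the date part of a combined-log-format timestamp,
# e.g. [10/Mar/2024:06:25:14 +0000] -> 10/Mar/2024
DATE_RE = re.compile(r"\[(\d{2}/[A-Za-z]{3}/\d{4}):")

def googlebot_hits_per_day(log_lines):
    """Count requests per day whose log line mentions Googlebot."""
    hits = Counter()
    for line in log_lines:
        if "Googlebot" not in line:
            continue  # skip ordinary visitors and other bots
        match = DATE_RE.search(line)
        if match:
            hits[match.group(1)] += 1
    return dict(hits)

# Hypothetical sample lines, for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /toys/bikes HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:25:20 +0000] "GET /toys/cars HTTP/1.1" 200 4870 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2024:07:01:02 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [11/Mar/2024:01:12:45 +0000] "GET /toys HTTP/1.1" 200 3001 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

print(googlebot_hits_per_day(SAMPLE_LOG))
```

Comparing the daily counts with the number of pages on the site gives a rough sense of how long a full crawl of the site would take.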

How to optimize the crawl budget?

The main objective of optimizing your crawl budget is to ensure that none of it is wasted. In practice, that means fixing the root causes of crawl budget waste. We monitor thousands of websites; if you checked each one for crawl budget issues, you'd quickly notice a pattern: most websites have the same problems.

We frequently encounter the following reasons for wasted crawl budget:

(i) Accessible URLs with parameters:

https://www.example.com/toys/bikes?color=black is an example of a URL with a parameter. The parameter is used to save a visitor's selection in a product filter.
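To see how many crawlable URLs collapse onto the same underlying page, you can strip the filter parameters and compare what is left. Below is a minimal sketch using Python's standard `urllib.parse` module. The set of parameter names in `FILTER_PARAMS` is an assumption for illustration; which parameters are safe to ignore depends on your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filter parameters that do not change the main content of a page.
FILTER_PARAMS = {"color", "size", "sort"}

def strip_filter_params(url, ignored=FILTER_PARAMS):
    """Return `url` with the ignored query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )

print(strip_filter_params("https://www.example.com/toys/bikes?color=black"))
print(strip_filter_params("https://www.example.com/toys/bikes?color=black&page=2"))
```

If many crawled URLs reduce to the same stripped URL, those parameter variants are likely candidates for a canonical tag or for being blocked from crawling.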

(ii) Duplicate content:

Pages that are highly similar or identical are "duplicate content." Examples include copied pages, internal search result pages, and tag pages.

(iii) Low-quality content:

Pages with very little content or pages that add no value.

(iv) Broken and redirecting links:

Broken links point to pages that no longer exist, while redirecting links point to URLs that redirect to other URLs.

(v) Incorrect XML sitemap URLs:

Non-indexable pages and non-pages, such as 3xx, 4xx, and 5xx URLs, should not be included in your XML sitemap.
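As a rough illustration, the sketch below filters a list of crawled URLs down to those that returned HTTP 200 and writes them into a sitemap. The `status_by_url` data is hypothetical, and the sketch assumes you already have status codes from a crawl. A 200 status alone does not prove a page is indexable (it may still carry a noindex directive), so treat this as a first filter only.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def sitemap_candidates(status_by_url):
    """Keep only URLs that returned HTTP 200 (drops 3xx, 4xx, and 5xx)."""
    return sorted(url for url, status in status_by_url.items() if status == 200)

def build_sitemap(urls):
    """Build a minimal XML sitemap string containing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical crawl results, for illustration only.
status_by_url = {
    "https://www.example.com/": 200,
    "https://www.example.com/toys/bikes": 200,
    "https://www.example.com/old-page": 301,
    "https://www.example.com/missing": 404,
}

print(build_sitemap(sitemap_candidates(status_by_url)))
```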

(vi) Pages with a long load time / time-outs:

Pages that take a long time to load, or don't load at all, harm your crawl budget because they signal to search engines that your website can't handle the requests, and search engines may lower your crawl limit as a result.

(vii) A large number of non-indexable pages:

When a website contains a large number of non-indexable pages, crawlers spend their time on URLs that can never appear in search results.

(viii) Poor internal link structure:

If your internal link structure isn't set up properly, search engines may overlook some of your pages.

Why is a crawl budget important for SEO?

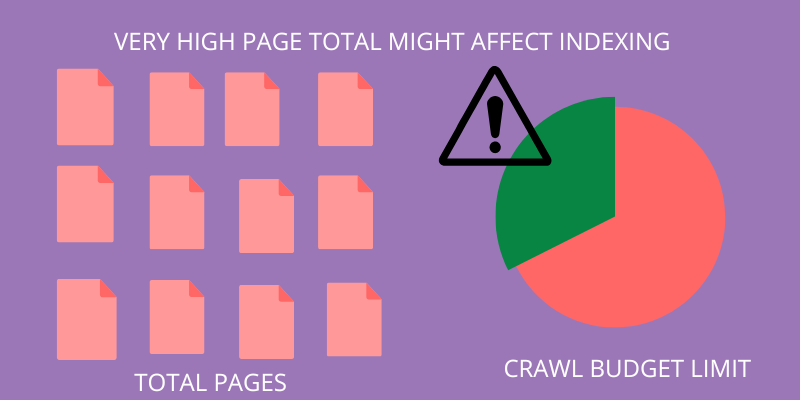

In brief, if Google does not index a page, it will not rank for anything.

If the number of pages on your site exceeds the crawl budget, you will have pages that aren't indexed.

Nevertheless, the vast majority of websites do not need to be concerned about the crawl budget. Google is extremely effective at finding and indexing pages.

However, there are a few instances where you should consider crawl budget:

You run a large website: If you have a website (such as an eCommerce site) with 10,000 or more pages, Google may have difficulty finding them all.

You've just added a slew of new pages: If you've recently added a new section to your site with hundreds of pages, make sure you have enough crawl budget to get them all indexed quickly.

Many redirects: Many redirects and redirect chains deplete your crawl budget.
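To make redirect chains concrete: every hop in a chain is an extra request the crawler has to make before it reaches real content. The sketch below follows a URL through a redirect map and counts the hops. The `REDIRECTS` mapping and the `max_hops` limit are assumptions for illustration; in practice you would build the mapping from a crawl or from your server's redirect rules.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow `url` through the `redirects` mapping; return (final_url, hops)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or chain longer than {max_hops} hops at {url}")
        seen.add(url)
    return url, hops

# Hypothetical redirect rules: /old-bikes -> /bikes-2019 -> /toys/bikes
REDIRECTS = {
    "/old-bikes": "/bikes-2019",
    "/bikes-2019": "/toys/bikes",
}

print(resolve_redirect("/old-bikes", REDIRECTS))
```

Any source URL that needs more than one hop is a candidate for pointing directly at the final destination.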

With that in mind, here are some easy ways to make the most of your site's crawl budget.

What are the best practices for crawl budget?

(i) Improve Site Speed

Improving the page speed of your site may result in Googlebot crawling more of your site's URLs.

Google states that making a site faster "improves the users' experience while also increasing crawl rate."

To put it another way, slow-loading pages waste Googlebot's time. If your pages load quickly, Googlebot has more time to visit and crawl your pages.

(ii) Make use of internal links:

Googlebot prioritizes pages that have a high number of external and internal links pointing to them.

Ideally, you would have backlinks pointing to every page on your site. In most cases, however, this is not feasible.

That is why internal linking is so important.

Internal links direct Googlebot to all of the pages on your website that you want to be indexed.

(iii) Use a Flat Website Architecture

According to Google, URLs that are more popular on the Internet tend to be crawled more often to keep them fresher in its index.

In the world of Google, popularity equals link authority.

That is why you should use a flat website architecture on your website.

A flat architecture arranges things so that all of your site's pages receive some link authority.
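One way to check how flat a site is in practice is to measure click depth: the minimum number of clicks needed to reach each page from the homepage. The sketch below runs a breadth-first search over an internal link graph. The `LINKS` dictionary is hypothetical; a real one would come from crawling your own site.

```python
from collections import deque

def click_depths(links, start="/"):
    """Return the minimum number of clicks from `start` to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/toys", "/about"],
    "/toys": ["/toys/bikes", "/toys/cars"],
    "/toys/bikes": ["/toys/bikes/red-bike"],
}

for page, depth in click_depths(LINKS).items():
    print(depth, page)
```

In a flat architecture most pages sit at a low depth; pages that only appear many clicks deep are candidates for extra internal links from higher-level pages.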

(iv) Avoid "Orphan Pages"

Pages that have no internal or external links pointing to them are considered orphans.

Google has a difficult time locating orphan pages. So, if you want to get the most out of your crawl budget, make sure that every page on your site has at least one internal or external link pointing to it.
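A simple way to look for orphan candidates is to compare the list of pages you know exist (for example, from your CMS or sitemap) with the set of pages that receive at least one internal link. The sketch below does exactly that with set arithmetic. The page list and link graph are hypothetical, and the sketch only considers internal links, so a page it reports may still have external links pointing to it.

```python
def find_orphans(all_pages, links, home="/"):
    """Return known pages that no other page links to (the homepage is excluded)."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(set(all_pages) - linked - {home})

# Hypothetical data: every page you know about, and the internal link graph.
ALL_PAGES = ["/", "/toys", "/toys/bikes", "/old-landing-page"]
LINKS = {
    "/": ["/toys"],
    "/toys": ["/toys/bikes", "/"],
}

print(find_orphans(ALL_PAGES, LINKS))
```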

(v) Reduce Duplicate Content

Limiting duplicate content is a good idea for a variety of reasons.

Duplicate content, it turns out, can deplete your crawl budget.

This is due to Google's desire to avoid wasting resources by indexing multiple pages with the same content.

As a result, ensure that all of your site's pages contain unique, high-quality content.

This is difficult for a site with 10,000+ pages. However, it is required if you want to get the most out of your crawl budget.
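On a large site, a first pass at finding duplicates can be automated. The sketch below fingerprints the text of each page after normalizing whitespace and case, then groups URLs that share a fingerprint. It only catches exact duplicates after that normalization; near-duplicates need fuzzier techniques that are not shown here. The sample pages are made up, and the sketch assumes you have already extracted the main text of each page.

```python
import hashlib
import re
from collections import defaultdict

def content_fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def duplicate_groups(text_by_url):
    """Return lists of URLs whose normalized text is identical."""
    groups = defaultdict(list)
    for url, text in text_by_url.items():
        groups[content_fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]

# Hypothetical extracted page text, for illustration only.
PAGES = {
    "/toys/bikes": "Black bikes for kids.",
    "/toys/bikes?color=black": "Black  bikes for KIDS.\n",
    "/toys/cars": "Toy cars for kids.",
}

print(duplicate_groups(PAGES))
```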

How to increase the website’s crawl budget?

According to Google, there is a strong relationship between page authority and crawl budget. The greater a page's authority, the greater its crawl budget. Simply put, increase the authority of your page to increase your crawl budget.

(i) Update Your Content Frequently (and ping Google once you do):

There isn't much to say about this one: add new, unique content as often as you can afford, and do it on a regular schedule. If you can't update your site daily, about three times a week is a reasonable target.

(ii) Examine Your Server:

Check that your server is operationally sound: pay attention to uptime and to the reports of unreachable pages in Google Search Console (formerly Google Webmaster Tools). Pingdom and Mon.itor.us are two tools I recommend in this situation.

(iii) Keep Load Time in Mind:

Keep in mind that the crawler operates on a budget; if it spends too much time fetching your large images or PDFs, it will not have time to visit your other pages.

(iv) Analyze the Links:

Check the internal link structure of the site to ensure that there is no duplicate content returned via different URLs: the more time the crawler spends figuring out your duplicate content, the fewer useful and unique pages it will be able to visit.

(v) Create More Links:

Increase the number of backlinks from regularly crawled sites.

(vi) Include a Sitemap:

Though it is debatable whether the sitemap can help with crawling and indexing issues, many webmasters report an increased crawl rate after implementing it.

(vii) Make It Simple:

Check that your server is returning the correct header response. Does it properly handle your error pages? Don't leave it up to the bot to figure out what happened; instead, explain it clearly.
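A common case of an unclear response is an error page served with a 200 status, often called a "soft 404." The sketch below flags responses that look like that. The phrase list is an assumption for illustration and would need tuning for your own error templates; the function takes a status code and body text that you have already fetched.

```python
# Hypothetical phrases that typically appear on error pages.
NOT_FOUND_PHRASES = ("page not found", "no longer available", "nothing was found")

def looks_like_soft_404(status_code, body_text):
    """Return True when a page says 'not found' but the server answered 200."""
    if status_code != 200:
        return False  # a real 404 or 410 already tells the crawler what happened
    text = body_text.lower()
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)

print(looks_like_soft_404(200, "<h1>Page Not Found</h1>"))
print(looks_like_soft_404(404, "<h1>Page Not Found</h1>"))
```

Pages flagged this way should usually return a 404 or 410 status instead, so that crawlers do not keep revisiting them.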

What factors limit the crawl budget?

Crawl limit, also known as crawl host load, is determined by a variety of factors, including the website's condition and hosting capabilities. Search engine crawlers are configured to avoid overloading a web server.

The crawl budget will be reduced if your website returns server errors or if the requested URLs time out frequently. Similarly, if your website is hosted on a shared hosting platform, the crawl limit will be lower because the limit is set per host and you must share it with the other websites on that host.

Conclusion

Crawl budget was, is, and most likely will continue to be an important factor for your website, so it makes sense to use it fully. What steps have you taken to ensure that your website reaps the full benefits of its crawl budget? Making those changes is up to whoever maintains the website, and the practices described above are a good place to start.
